For a long time, I couldn’t get a basic diffusion LLM to converge, no matter what I tried. The idea was attractive; the results were wasted GPU compute and RAM. For a while, that convinced me the topic wasn’t worth investigating, so I missed developments like LLaDA (GitHub, arXiv:2502.09992).

And then, not so long ago, I was at a friend’s place (hey there, Vasily, I know you’re reading this!). I brought up this issue again while we were looking at Inception Chat together, and you can imagine my surprise when I saw they had a free tier to test.

I had tried it in OpenCode and seen it break. I wasn’t impressed, but I wondered whether it was breaking because of the missing retries on sampling errors I’d noticed. Since I was just visiting, we agreed to simply poke at Inception Chat and see what it could do. I tried the web version and asked it how it worked, and got quick, clear answers with references to plenty of research I’d skipped, MDLM for instance.

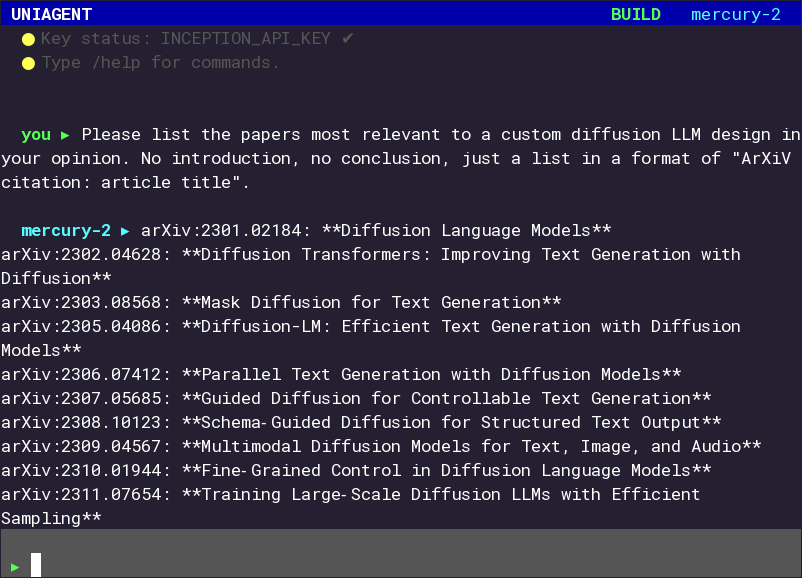

At this point, we were both curious about Inception Mercury’s capabilities, so we asked Claude Opus to code us an agent to probe them. It’s nothing fancy, just Python, Urwid and other basic things, but it did the job. To illustrate, here’s a sample output (it includes hallucinated content):

It surprised us: once we started asking Mercury to write small projects, it delivered working, if imperfect, code in under a second. Nothing hard, just a fortunes-style program and a Mandelbrot generator, but the speed was the point.

That reminded me of two patterns Anthropic has described. The first is “orchestrator-workers” from “Building effective agents”: in a common setup, an orchestrator (a big model) splits a task into pieces, hands them to smaller worker models, and stitches the results together. The second, and more relevant here, is “The advisor strategy”: the smaller model, the executor, drives the process and consults the bigger one, the advisor, for input on complex issues.
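To make the advisor idea concrete, here is a minimal sketch of the control flow as I understand it, not Anthropic’s implementation or API. Everything in it is hypothetical: `AdvisorLoop`, the stub models, and especially the escalation rule, where the executor simply answers `UNSURE`. How a real executor should decide to escalate is exactly the open question below.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# Hypothetical interface: a "model" is any function from a prompt string to a reply.
Model = Callable[[str], str]


@dataclass
class AdvisorLoop:
    executor: Model                      # small, fast model that drives the work
    advisor: Model                       # larger model, consulted only when escalated
    needs_advice: Callable[[str], bool]  # hypothetical escalation rule applied to drafts
    log: List[Tuple[str, str]] = field(default_factory=list)

    def step(self, task: str) -> str:
        draft = self.executor(task)
        if not self.needs_advice(draft):
            self.log.append(("executor", task))
            return draft
        # The executor frames the question: the advisor sees only the task and
        # the draft, not the whole history (a "curated" context).
        question = f"Task: {task}\nDraft: {draft}\nWhat should change?"
        advice = self.advisor(question)
        self.log.append(("advisor", task))
        # Control returns to the executor, which redoes the step with the advice.
        return self.executor(f"{task}\nAdvice: {advice}")

    def run(self, tasks: List[str]) -> List[str]:
        return [self.step(t) for t in tasks]


# Stub models standing in for real API calls.
def small_model(prompt: str) -> str:
    first_line = prompt.splitlines()[0]
    if "hard" in first_line and "Advice:" not in prompt:
        return "UNSURE"
    return f"done: {first_line}"


def big_model(prompt: str) -> str:
    return "split it into smaller steps"


loop = AdvisorLoop(small_model, big_model, needs_advice=lambda d: d == "UNSURE")
results = loop.run(["easy task", "hard task"])
print(results)
print(loop.log)
```

The design choice worth noticing is that control always returns to the executor; the advisor never touches the output directly, which is what distinguishes this from orchestrator-workers.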

So the direction looks promising, though I have concerns. How does the executor know when to ask for help? And wouldn’t the sheer volume of context confuse even a stronger model? Not all LLMs handle the needle-in-a-haystack problem well, and there are benchmarks like BABILong (GitHub, arXiv:2406.10149) to get a clearer picture.
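For readers who haven’t seen one, here is a minimal sketch of the simplest needle-in-a-haystack probe: bury one fact at a chosen depth in filler text and check whether the answer contains it. The filler, the needle, and `tail_only_model` (a stub that only “sees” the last 200 characters) are made up for illustration. BABILong goes much further by hiding bAbI-style reasoning tasks, with several supporting facts, inside long background text.

```python
import random
from typing import List

NEEDLE = "The secret code is 7421."
FILLER = ["The sky was grey.", "Nobody said anything.", "The tea went cold."]


def make_probe(needle: str, filler: List[str], n_sentences: int, depth: float, seed: int = 0) -> str:
    """Bury `needle` at relative `depth` (0.0 = start, 1.0 = end) inside filler text."""
    rng = random.Random(seed)
    hay = [rng.choice(filler) for _ in range(n_sentences)]
    hay.insert(round(depth * n_sentences), needle)
    return " ".join(hay)


def tail_only_model(context: str, question: str, window: int = 200) -> str:
    """Stub 'LLM' that effectively sees only the last `window` characters of its context."""
    tail = context[-window:]
    return "The code is 7421." if "7421" in tail else "I don't know."


def passed(answer: str, expected: str) -> bool:
    return expected in answer


scores = {
    depth: passed(tail_only_model(make_probe(NEEDLE, FILLER, 100, depth), "What is the code?"), "7421")
    for depth in (0.0, 0.5, 1.0)
}
print(scores)
```

Sweeping depth (and context length) is what turns a single pass/fail into a picture of where a model stops paying attention; the stub fails everywhere except at the very end.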

Still, a few things work in its favor: the advisor is the stronger long-context model, which probably helps; targeted reasoning over a curated context can cover much of the rest; and the executor itself frames what kind of help it needs.

I’ll stay confidently doubtful, since we still have no defense against failure modes like fabricated diseases. If frontier models uncritically absorbed a fabricated condition into their outputs, then “stronger advisor with curated context” clearly isn’t a correctness guarantee.

(Then again, theory sets the bounds; practice shows what actually holds.)

To scratch the itch, I trained a diffusion model on tiny_shakespeare, using an A100.
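I haven’t described my training setup here, so as a point of reference only, this is a minimal sketch of the masked-diffusion idea behind MDLM and LLaDA. It assumes a character-level vocabulary, which the truncated samples suggest. Three pieces are shown: the forward process (mask each token with probability `t`), a loss roughly following the 1/t-weighted masked cross-entropy those papers use (here also averaged over sequence length), and a sampler similar in spirit to LLaDA’s low-confidence remasking. `oracle_denoiser` is a hypothetical stand-in for a trained network; all names are illustrative.

```python
import math
import random
from typing import Dict, List, Optional, Tuple

MASK = None  # sentinel for a masked position
Seq = List[Optional[str]]


def forward_mask(tokens: List[str], t: float, rng: random.Random) -> Seq:
    """Forward process: replace each token with MASK independently with probability t."""
    return [MASK if rng.random() < t else tok for tok in tokens]


def masked_loss(true_logprobs: List[float], noisy: Seq, t: float) -> float:
    """NLL of the original tokens over masked positions only, weighted by 1/t
    and averaged over sequence length."""
    nll = -sum(lp for lp, tok in zip(true_logprobs, noisy) if tok is MASK)
    return nll / (t * len(noisy))


TARGET = list("ROMEO: thou hast done")


def oracle_denoiser(x: Seq) -> Dict[int, Tuple[str, float]]:
    """Hypothetical network stand-in: predicts the target character at every masked
    position, with higher confidence next to already-revealed characters."""
    preds = {}
    for i, tok in enumerate(x):
        if tok is MASK:
            neighbors = sum(1 for j in (i - 1, i + 1) if 0 <= j < len(x) and x[j] is not MASK)
            preds[i] = (TARGET[i], neighbors - i / len(x))  # ties go to the left
    return preds


def sample(denoise, length: int, steps: int) -> str:
    """Start fully masked; each step, commit the most confident predictions and
    leave the rest masked, so every position is filled by the last step."""
    x: Seq = [MASK] * length
    for s in range(steps):
        masked = [i for i, tok in enumerate(x) if tok is MASK]
        if not masked:
            break
        preds = denoise(x)
        k = math.ceil(len(masked) / (steps - s))
        for i in sorted(masked, key=lambda j: preds[j][1], reverse=True)[:k]:
            x[i] = preds[i][0]
    return "".join(x)


print(sample(oracle_denoiser, len(TARGET), steps=4))
```

Unlike an autoregressive model, nothing forces left-to-right order here: the sampler fills whichever positions it is most confident about, several per step, which is where the speed comes from.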

Prompt: 'ROMEO:'

temp=0.2: ROMEO:

Why, that thou hast done that thou hast done.

ROMEO:

Thou hast done that thou hast done.

ROMEO:

Thou hast done

temp=0.3: ROMEO:

Why, that thou hast done that thou hast done.

ROMEO:

Thou hast done that that thou hast done.

ROMEO:

Thou hast

temp=0.4: ROMEO:

What that thou hast done with thee with thee.

ROMEO:

Thou hast not tell thee with thee with thee love.

ROMEO:

T

temp=0.5: ROMEO:

Thou hast thou wilt thou hast thou love thee.

ROMEO:

Thou shalt have been thee in thy love.

ROMEO:

Thou wilt th

temp=0.7: ROMEO:

I pray, my liege, that they have not done.

ROMEO:

Nay, my lord, they have done.

ROMEO:

They have done, they hav

temp=1.0: ROMEO:

That will you have made your love?

ROMEO:

What name is not, and have your love.

ROMEO:

It is that love that wil
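How temperature enters my sampler isn’t stated above; a common approach, assumed here, is to divide each position’s logits by T before the softmax. The sketch shows what that does to a made-up three-way distribution. Low T piles probability onto the top choice, which is consistent with the “thou hast done” loops at 0.2 and 0.3; higher T spreads it out, trading repetition for variety (and the occasional nonsense).

```python
import math
import random
from typing import List


def softmax_with_temperature(logits: List[float], temperature: float) -> List[float]:
    """Divide logits by T before softmax: T < 1 sharpens, T > 1 flattens."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_token(logits: List[float], temperature: float, rng: random.Random) -> int:
    """Draw one index from the temperature-scaled distribution."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


logits = [2.0, 1.0, 0.0]  # made-up scores for three candidate characters
for T in (0.2, 0.5, 1.0):
    probs = softmax_with_temperature(logits, T)
    print(T, [round(p, 3) for p in probs])
```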

I’m glad my assumptions got broken. It happens often enough now that it’s worth auditing what I actually know versus what’s just learned helplessness.